VISC Center, Purdue University, Indianapolis, USA Driving Video

Profile Dataset (DVP)

|

Driving Video Profile Dataset, Update 8/25/2022 Introduction |

Sample |

|||||||||||||||||||||||||||||||

|

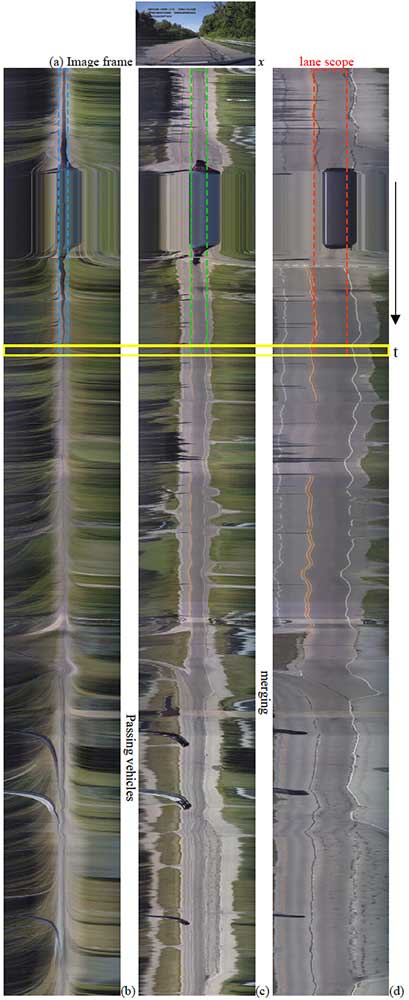

From a driving video, a road

profile and motion profile are extracted from a depth as spatial-temporal

images, in which road environment is displayed in a deformed 2D map and

dynamic objects show their trajectories [2]. With the temporal association

and dependency presented in the profiles, the road at the depth can be

located for vehicle path planning [5]. The vehicles as well as obstacles up

to that depth can be detected for braking to avoid collision. This dataset provides driving

video, road profile, and motion profile images for machine learning. The road

profiles are annotated pixel-wise in classes of road, off-road, vehicle,

pedestrian, vertical obstacle, lane mark, as well as slow driving periods

such as ego-vehicle stopping and sharp turning. Figure 1 shows an example of

such profile images. The road

profile (RP) data has been annotated pixel-wise for semantic segmentation.

Each driving video is sampled with horizontal lines at three depths ranging

from close, mid, to far. Three road profile images

and associated motion profiles are obtained through temporal scanning at the

lines. |

Video frame (a) at top and the road profiles from (b) far, (c) mid, and (d) close depths. Contributors Contributors:

Guo Cheng, Jiang Yu Zheng The credit

also gives to Dr. Yaobin Chen, Dr. Ranran Tian in TASI, IUPUI for providing source videos in

generating Road/Motion Profiles. Contact: jzheng@cs.iupui.edu (Jiang Yu Zheng) . |

|||||||||||||||||||||||||||||||

|

Dataset |

||||||||||||||||||||||||||||||||

|

Table I Road Profile Images, Motion

Profile Images, and Labeled Ground Truth from 60 driving videos

Table II Class colors in labeled

ground truth

Downloading

dataset for training and testing PNG images in a zipped file for training and testing. For

visualization, jpg images and videos are included as well for review, and HD

videos are downsized to 1/16th.

A readme.txt file indicates the categories of weather and

illumination. The heights of scanning lines for obtaining close, mid, and far

road profiles are 130, 80, 30 pixels respectively bellowed the horizon in the

video frames. Open Semantic Segmentation Code GitHub: https://github.com/BrookPurdueUniversity/Temporal-Shift-Memory Note: Including network in Patch-mode (trained and

inference in batch-mode), Naïve shift-mode (inference latest line

immediately), and TSM (minimum memory for latest line without latency). The

profiles are flipped in time dimension so that the time axis is upward for

visualization (different from the sample on the right of this paper). Duo to the zero padding added in TensorFlow

convolution on large (lower/right) side (i.e., a limitation of TensorFlow),

the TSM in Tensorflow will damage the data of the

latest input and thus ruin the entire segmentation accuracy. For this reason,

this profile flipping in the time domain will guarantee the outcome as

proposed network model in the paper [0]. This also allows the network to

learn the temporal order of data such that the later input is streamed into

the network from the small side. The

profile scanning outputs the latest result on small side of the network

including TSM, when the network shifts from bottom to top of the road profile

line by line. References Road profile semantic segmentation [0] G. Cheng, J. Y. Zheng,

“Sequential Semantic Segmentation of Road Profiles for Path and Speed

Planning”, IEEE Transaction on Intelligent Transportation Systems, pp.

1-14, 2022. [1] G. Cheng, J. Y. Zheng and M. Kilicarslan,

"Semantic Segmentation of Road Profiles for Efficient Sensing in

Autonomous Driving," 2019

IEEE Intelligent Vehicles Symposium (IV), 2019, pp. 564-569, doi: 10.1109/IVS.2019.8814259. Pedestrian

semantic segmentation in motion profiles [2] G. Cheng and J. Y. Zheng, "Semantic Segmentation for

Pedestrian Detection from Motion in Temporal Domain," 2020 25th

International Conference on Pattern Recognition (ICPR), 2021, pp. 6897-6903, doi: 10.1109/ICPR48806.2021.9411958. Vehicle

interactions in motion profiles [3] Z. Wang, J. Y. Zheng and Z. Gao, "Detecting Vehicle

Interactions in Driving Videos via Motion Profiles," 2020 IEEE

23rd International Conference on Intelligent Transportation Systems (ITSC), 2020, pp. 1-6, doi:

10.1109/ITSC45102.2020.9294617. [4]

M. Kilicarslan and J. Y. Zheng, "Predict

Vehicle Collision by TTC From Motion Using a Single Video Camera," in IEEE

Transactions on Intelligent Transportation Systems, vol. 20, no. 2, pp. 522-533, Feb. 2019, doi:

10.1109/TITS.2018.2819827. Weather

and illumination reflected in road profiles [5] Z. Wang, G. Cheng and J. Y. Zheng, "Road Edge Detection

in All Weather and Illumination via Driving Video Mining," in IEEE

Transactions on Intelligent Vehicles, vol. 4, no. 2, pp. 232-243,

June 2019, doi: 10.1109/TIV.2019.2904382. [6] G. Cheng, Z. Wang and J. Y. Zheng, "Modeling Weather

and Illuminations in Driving Views Based on Big-Video Mining," in IEEE

Transactions on Intelligent Vehicles, vol. 3, no. 4, pp. 522-533,

Dec. 2018, doi: 10.1109/TIV.2018.2873920. Initial

video profiles [7] M. Kilicarslan and J. Y. Zheng,

"Visualizing driving video in temporal profile," 2014 IEEE

Intelligent Vehicles Symposium Proceedings, 2014, pp. 1263-1269, doi: 10.1109/IVS.2014.6856420 |